Aura’s breach was not a “hack” in the traditional sense. No malware, no stolen credentials, no dramatic break-in. Attackers “simply” interacted with a misconfigured and overly permissive Salesforce endpoint, and it returned exactly what they asked for.

In this piece, we break down how it happened step by step inside the Salesforce ecosystem, and the methods ShinyHunters (the group behind the breach) used to turn that exposure into a full-scale data leak and extortion attempt. Together, they point to a broader shift in cybercrime: the rise of “living off the SaaS,” a new and overexposed frontier for data harvesting.

More importantly, we explore why this matters to all of us. CRM systems, often less hardened than core infrastructure, hold exactly the kind of data attackers need: personal details, support history, and real customer interactions. Once exposed, that data becomes fuel for highly convincing scams and identity theft at scale. That is the real danger behind dark web leaks: not just that your data is out there, but how easily it can be weaponized. It is also why the security of the companies we trust matters more than ever.

When you get one of those alerts, “your data was found on the dark web,” the natural question is simple: how did it get there in the first place?

It might be easy to imagine a hacker breaking into a system and stealing data like we see in the movies. But modern breaches rarely look like that anymore. Today’s attacks operate more like a supply chain. One group finds exposure, another extracts the data, another turns it into social engineering, and another monetizes it. What used to be a one-off breach is now a well-coordinated ecosystem of dark groups and threat actors.

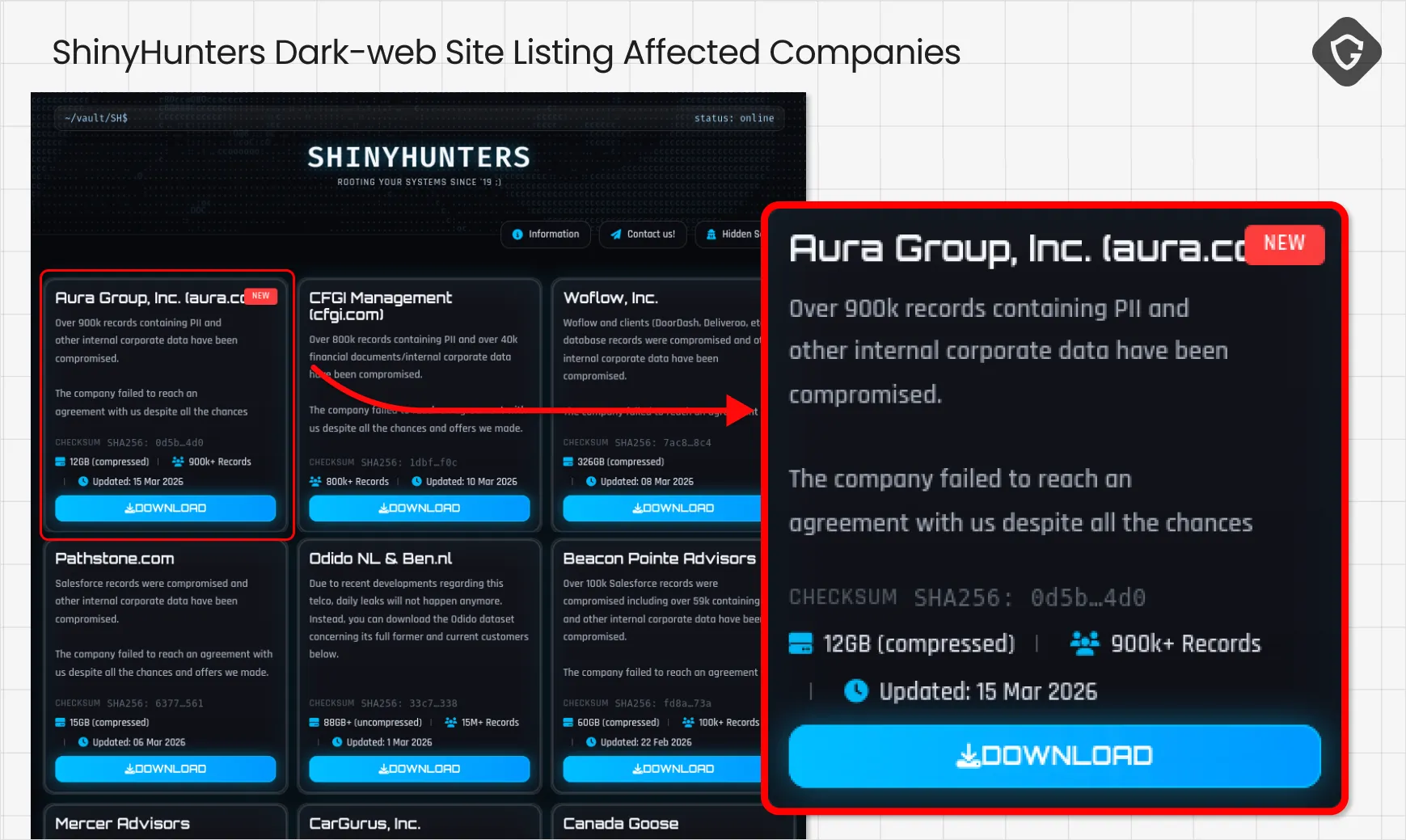

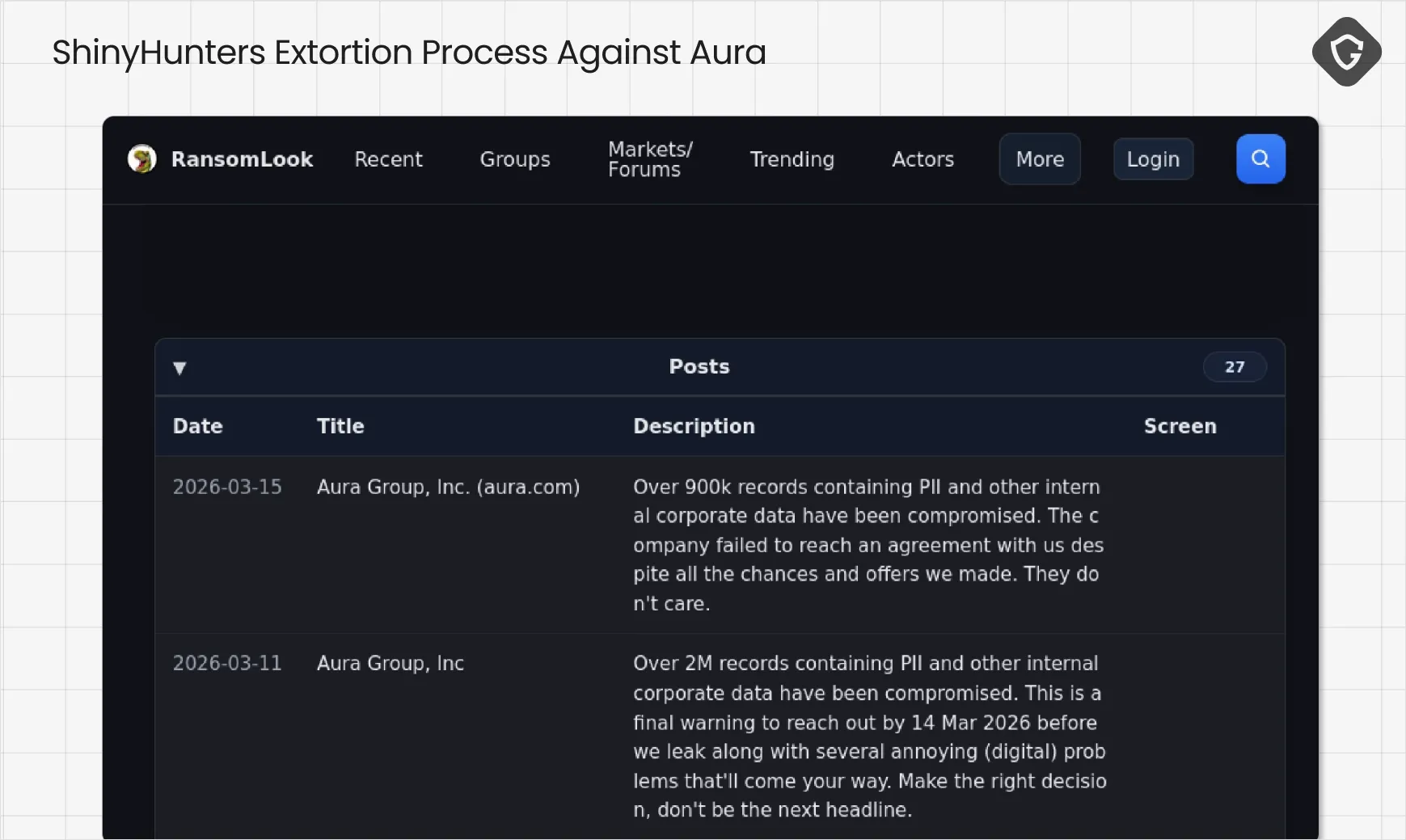

A clear example of this model is ShinyHunters, now operating as part of the SLSH collective, a loose alliance of specialized actors. Some focus on vishing (voice call phishing) and impersonation, others on persistence and extortion, while ShinyHunters excels at large-scale data extraction. Together, they form a pipeline that can quickly turn exposed data into real-world impact.

In the past few days, we have seen how this plays out in reality. Data tied to Aura, a company focused on protecting users from online threats and identity theft, ended up circulating in underground forums. The irony is obvious, but the method is what matters: the attackers simply interacted with a system that was already more open than intended.

The uncomfortable truth is that the most exposed parts of modern organizations are often the most sensitive ones. Customer support systems, CRMs, and public-facing portals are built for accessibility, but they also hold the exact data that later appears in dark web leaks: personal details, account data, and support histories. This is what attackers call “living off the SaaS,” and they have become very efficient at turning these platforms into scalable data sources.

So instead of asking how attackers got in, the better question is how these systems were already open. To answer that, let’s look at how it played out in Aura’s case, and walk through how customer data can move from a legitimate platform straight into the dark web.

In Aura’s case, the platform in question was its CRM, Salesforce. The data was not “stolen” in the traditional sense; it was quietly scraped from Aura’s Salesforce environment.

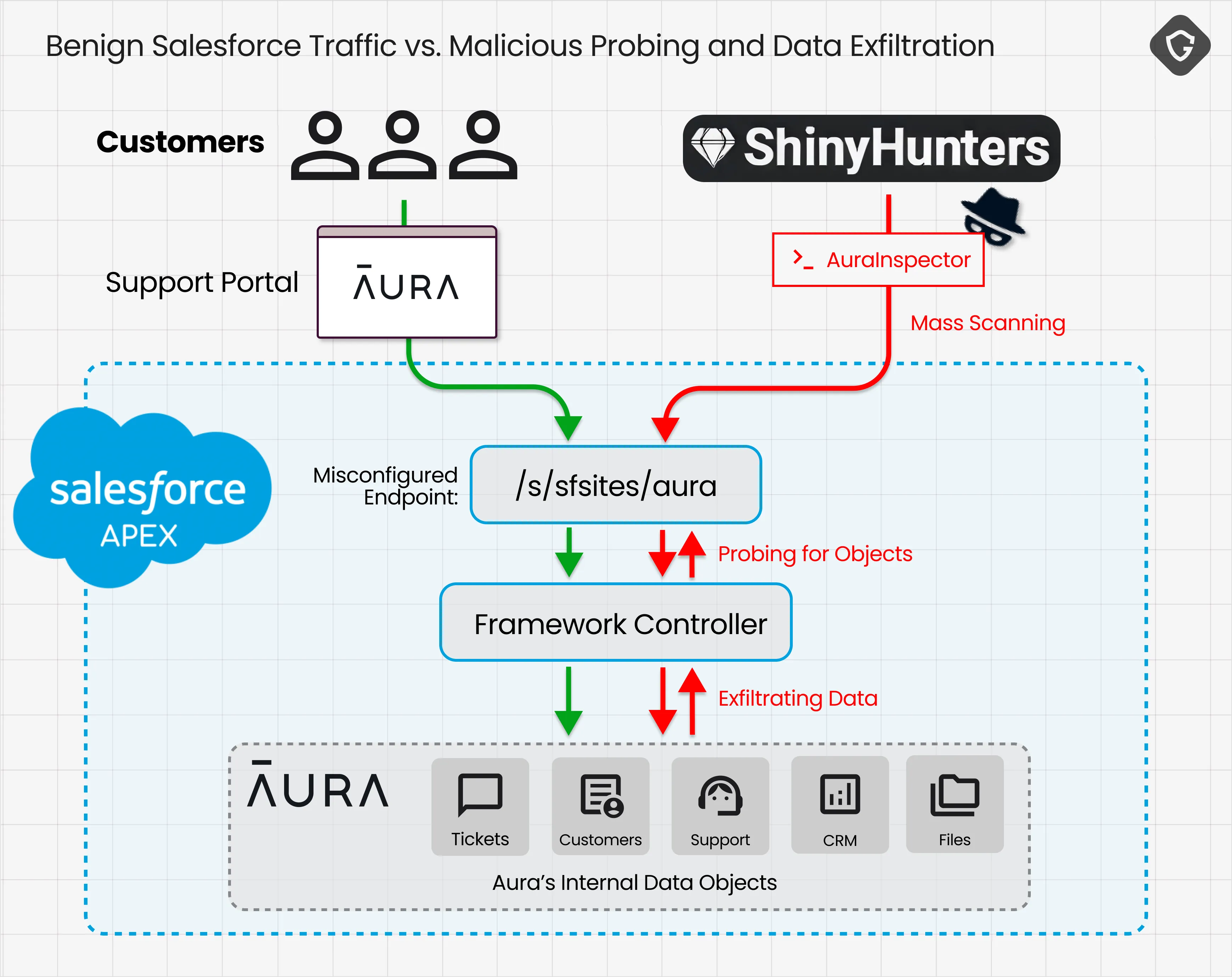

To understand how, we need to look at how Salesforce Experience Cloud works. It powers customer-facing portals like support centers and dashboards, all connected directly to internal CRM data. Every click, page load, or form submission relies on real-time communication between the browser and Salesforce’s backend.

That communication runs through something called the Aura framework. Yes, another “Aura,” completely unrelated to the company, just another layer of irony in this story. This framework exposes a public endpoint that the front end uses to talk to the backend. It is meant to be accessible; without it, the portal simply would not function. In practice, it becomes the bridge between what users see and the organization’s internal data.

The critical piece here is the guest user profile. It allows unauthenticated visitors to interact with public parts of the site, but its permissions are fully configurable. And this is where things went wrong. In Aura’s case, and many others affected by this campaign, that profile was given more access than intended. API access was enabled, internal objects were readable, and sharing rules were too broad. Each of these settings might seem harmless on its own, but together they turned a simple interface into something much more powerful.
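To make the “harmless on its own, dangerous together” point concrete, here is a minimal audit sketch. It assumes you have already exported a simplified snapshot of a portal’s guest profile; the `GuestProfileConfig` fields, the `audit_guest_profile` function, and the `SENSITIVE_OBJECTS` set are all hypothetical names for illustration, not Salesforce metadata or APIs. The only real Salesforce convention used is that custom objects have API names ending in `__c`.

```python
from dataclasses import dataclass, field

@dataclass
class GuestProfileConfig:
    """Hypothetical, simplified snapshot of a portal's guest user profile.
    Field names are illustrative, not actual Salesforce setting names."""
    api_enabled: bool = False
    readable_objects: set = field(default_factory=set)
    broad_sharing_objects: set = field(default_factory=set)

# Hypothetical list of core CRM objects unauthenticated visitors should not read.
SENSITIVE_OBJECTS = {"Contact", "Account", "Lead", "Case"}

def is_sensitive(obj: str) -> bool:
    # Custom objects (API names ending in "__c") often hold business-specific data.
    return obj in SENSITIVE_OBJECTS or obj.endswith("__c")

def audit_guest_profile(cfg: GuestProfileConfig) -> list:
    """Return findings; the HIGH finding fires only when all three settings combine."""
    findings = []
    exposed = sorted(o for o in cfg.readable_objects if is_sensitive(o))
    if cfg.api_enabled:
        findings.append("API access enabled for guest user")
    for obj in exposed:
        findings.append(f"guest can read {obj}")
    risky = [o for o in exposed if o in cfg.broad_sharing_objects]
    if cfg.api_enabled and risky:
        findings.append("HIGH: API + read + broad sharing on " + ", ".join(risky))
    return findings
```

Each check on its own produces a low-severity finding; only the combination of API access, read permission, and broad sharing on the same object is escalated, which mirrors how the misconfigurations compounded in this campaign.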

Instead of behaving like a UI helper, the endpoint effectively became a queryable API. Attackers did not need credentials or exploits. They simply sent well-formed requests and received well-structured responses. First, they asked the system what data exists, mapping objects and fields. Then they started pulling records in bulk. From Salesforce’s perspective, everything looked legitimate. The system was doing exactly what it was configured to do.
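The two-phase pattern described above (map the schema first, then pull records in bulk) can leave a recognizable trace in request logs. The sketch below assumes logs have already been normalized into `(client_id, kind, records_returned)` tuples in time order, where `kind` is a hypothetical label: `"describe"` for object and field discovery, `"list"` for record retrieval. Both the event format and the `flag_enumerate_then_pull` function are illustrative, not part of any Salesforce logging product.

```python
from collections import defaultdict

def flag_enumerate_then_pull(events, record_threshold=1000):
    """Flag clients that discovered the schema and then pulled many records.

    events: iterable of (client_id, kind, records_returned), assumed time-ordered.
    kind: hypothetical label, "describe" (schema discovery) or "list" (record pull).
    """
    described = set()            # clients seen doing schema discovery
    pulled = defaultdict(int)    # records pulled after discovery, per client
    flagged = set()
    for client, kind, count in events:
        if kind == "describe":
            described.add(client)
        elif kind == "list" and client in described:
            pulled[client] += count
            if pulled[client] >= record_threshold:
                flagged.add(client)
    return flagged
```

This deliberately keys on the sequence rather than on volume alone, since a single large pull by a legitimate portal page is less suspicious than discovery followed by bulk retrieval. A real deployment would also need to cover clients that skip discovery entirely.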

The real turning point came with a tool called AuraInspector, released by Mandiant to help organizations detect exactly these kinds of misconfigurations. ShinyHunters took that tool and flipped it. Instead of using it to find exposure, they used it to automate extraction. By batching requests and optimizing queries, they were able to pull large datasets quickly and quietly. In a final layer of irony, the very tool meant to highlight the risk became the one used to scale it, effectively bypassing even basic safeguards like rate limiting.
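Batching is what makes per-request rate limiting weak here: one HTTP request can carry many operations. The sketch below budgets *actions* rather than requests over a sliding window. It assumes each request body carries a JSON `message` with an `actions` array, as Aura framework requests typically do; the `ActionRateLimiter` class and its parameters are hypothetical, and this is not how Salesforce itself enforces limits.

```python
import json
from collections import deque

class ActionRateLimiter:
    """Sliding-window limiter that budgets actions, not HTTP requests.
    Assumes the request's `message` field is JSON with an "actions" list."""

    def __init__(self, max_actions: int, window_seconds: float):
        self.max_actions = max_actions
        self.window = window_seconds
        self.history = {}  # client -> deque of (timestamp, action_count)

    def allow(self, client: str, message: str, now: float) -> bool:
        n = len(json.loads(message).get("actions", []))
        q = self.history.setdefault(client, deque())
        # Drop entries that have fallen out of the sliding window.
        while q and q[0][0] <= now - self.window:
            q.popleft()
        used = sum(count for _, count in q)
        if used + n > self.max_actions:
            return False
        q.append((now, n))
        return True
```

With a budget of 10 actions per minute, a single request batching 8 actions is allowed, but a second identical request one second later is rejected, even though a request-count limiter would have seen only two requests.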

If this were only about exposed data, it would already be serious, but in this campaign the data itself was just the starting point. What was extracted from Salesforce environments like Aura’s is structured, rich, and immediately usable. Attackers pulled directly from core CRM objects such as Contacts, Accounts, Leads, and Support Cases, along with custom objects that often contain the most sensitive business-specific data. This included full names, email addresses, phone numbers, and physical addresses, alongside complete support histories, internal case notes, and customer interactions. In some cases, access extended to files and attachments: invoices, documents, and internal materials that were never meant to leave the system. Altogether, this translated into millions of records spanning customer identities, support data, and operational context. It was not just a leak of user records, but a full exposure of how those users interact with the organization.
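One practical defensive step is knowing which objects and fields in your own org carry this kind of personal data before deciding what a guest profile may read. Here is a minimal sketch, assuming you already have an object-to-field-name inventory; the regex patterns and the `classify_fields` function are hypothetical heuristics, not an official data-classification scheme. The example field names (`FirstName`, `Email`, `MailingStreet`, `Description`) are standard Salesforce fields.

```python
import re

# Hypothetical heuristics: field-name patterns that often indicate personal data.
PII_PATTERNS = {
    "name": re.compile(r"(first|last|full)?name$", re.I),
    "email": re.compile(r"email", re.I),
    "phone": re.compile(r"phone|mobile", re.I),
    "address": re.compile(r"street|city|postal|address", re.I),
    "free_text": re.compile(r"description|comment|note", re.I),
}

def classify_fields(inventory):
    """Map each object to {field: first matching PII label}; omit objects with no hits."""
    report = {}
    for obj, fields in inventory.items():
        hits = {}
        for f in fields:
            for label, pattern in PII_PATTERNS.items():
                if pattern.search(f):
                    hits[f] = label
                    break  # first matching label wins
        if hits:
            report[obj] = hits
    return report
```

Free-text fields are included on purpose: case descriptions and notes are exactly where support histories and internal context end up, and they rarely show up in a name-based PII review.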

The type of data exposed here is exactly what powers the identity theft ecosystem. A name and email on their own may seem low-risk, but when combined with phone numbers, support history, and real interactions, they become a tool for impersonating reality. Attackers can reference actual support tickets, mention past purchases, or continue an existing conversation, making their outreach feel legitimate rather than suspicious. At that point, the attack is no longer about tricking someone blindly, but about exploiting trust built on real data.

For the everyday user, this changes the nature of the risk. It is not just about personal data being leaked, but about that data being actively used in targeted and convincing attacks. Phishing emails become harder to spot, vishing calls sound more credible, and requests for credentials or approvals feel like part of a normal interaction. What looks like harmless information quickly turns into a high-confidence attack surface, where the attacker already knows enough to lower defenses.

At the same time, that very same data can be used to target the organization it was taken from. Attackers can impersonate employees, assume internal roles, and navigate company processes with surprising ease. Customer data becomes an entry point back into the business itself, especially in environments built on interconnected SaaS systems. Once access is gained, this often leads to lateral movement, where attackers expand across platforms, deepen their reach, and establish persistence without relying on traditional exploits.

Dark web monitoring, like the kind Guardio offers, is not just a nice-to-have alerting layer; it is often the first signal that something has already gone wrong. It gives users visibility into exposure they cannot directly control, and a chance to react before that data is further weaponized. In a world where breaches are increasingly silent and structured, that visibility becomes critical, not as prevention, but as early detection of an already moving threat.

At the same time, this case raises a deeper question around accountability. Is it the responsibility of the company that exposed the data, or the platform that enabled it at scale? In reality, it is both. When common SaaS misconfigurations turn into repeatable attack patterns, this is no longer an isolated mistake; it is a systemic risk that demands stronger defaults and clearer boundaries.

And maybe the most important takeaway is this: we, as consumers, should start asking these questions before choosing who we trust with our data. Not just whether the service is good or the price is right, but whether we truly believe they can keep our data safe.